I’ve had this WordPress domain for several years now, and in the beginning it was very convenient.

WordPress enabled me to set up a fully functional blog in a matter of hours. Everything from HTML markup, external content embedding, databases, and simple analytics was already conveniently set up.

However, after a while, I wanted to do some more advanced stuff. Here, the disadvantages of WordPress hosting became evident fast. Anything beyond the most simple capabilities is locked firmly behind paywalls. Arguably rightfully so. If you want to use WordPress’ add-ins, I feel you should pay for them. That’s their business model after all.

However, what greatly annoys me is that WordPress actively hinders you from arranging matters yourself. Want to incorporate some JavaScript in your page? Upgrade to a paid account. Want to use Google Analytics? Upgrade and buy an add-in. Want to customize your HTML / CSS code? Upgrade or be damned. Even the simplest of tasks — just downloading visitor counts — WordPress made harder than it should be.

You can download visitor statistics manually — day by day, week by week, or year by year. However, there is no way to download your visitor history in batches. If you want to have your daily visiting history, you will manually have to download and store every day’s statistics.

For me, getting historic daily data would entail 1100 times entering a date, scrolling down, clicking a button, specifying a filename, and clicking to save. I did this once, for 36 monthly data snapshots, and the insights were barely worth the hassle, I assure you.

Fortunately, today, after nearly three years of hosting on WordPress, I finally managed to circumvent this annoyance! Using the Python script detailed below, my computer now autonomously logs in to WordPress and downloads the historic daily visitor statistics for all my blogs and pages!

Let me walk you through the program and code.

Modules & Setup

Before we jump into Python, you need to install Chromedriver. Just download the zip, unpack the executable somewhere you can find it, and note its path, because you will need to pass it to Python later. Chromedriver allows Python’s selenium webdriver to open up and steer a Chrome browser.

We need another module for browsing: webdriver_manager. The other modules and their functions are for more common purposes: os for directory management, re for regular expressions, datetime for working with dates, and time for letting the computer sleep in between operations.

    from selenium import webdriver
    from webdriver_manager.chrome import ChromeDriverManager
    from time import sleep
    from datetime import datetime, timedelta
    import os
    import re

Helper Functions

I try to write my code in functions, so let’s dive into the functions that allow us to download visitor statistics.

To begin, we need to set up a driver (i.e., automated browser) and this is what get_driver does. Two things are important here. Firstly, the function takes an argument dir_download. You need to give it a path so it knows where to put any downloaded files. This path is stored under preferences in the driver options. Secondly, you need to specify the path_chromedriver argument. This needs to be the exact location you unpacked the chromedriver.exe. All these paths you can change later in the main program, so don’t worry about them for now. The get_driver function returns a ready-to-go driver object.

    def get_driver(dir_download, path_chromedriver):
        # Tell Chrome where to save downloaded files
        chrome_options = webdriver.ChromeOptions()
        prefs = {'download.default_directory': dir_download}
        chrome_options.add_experimental_option('prefs', prefs)
        # executable_path is the Selenium 3 way of pointing at chromedriver;
        # Selenium 4 expects a Service object instead
        driver = webdriver.Chrome(executable_path=path_chromedriver, options=chrome_options)
        return driver

Next, our driver will need to know where to browse to. So the function below, compile_traffic_url, uses an f-string to generate the url for the visitor statistics overview of a specific domain and date. Important here is that you will need to change the domain default from paulvanderlaken.com to your own WordPress address. Take a look at the statistics overview in your regular browser to see how you may tailor your urls.

Now, in the rest of the program, I work with dates stored as datetime date objects, such as the result of datetime.today().date(). By default, the compile_traffic_url function also uses today’s date as its date argument. However, WordPress expects simple string dates in the urls. Hence, I need a way to convert these datetime dates into simpler strings. That’s what the strftime method below does. It formats a datetime date to a date_string, in the format YYYY-MM-DD.

    def compile_traffic_url(domain='paulvanderlaken.com', date=None):
        # Resolve today's date at call time rather than once when the function is defined
        if date is None:
            date = datetime.today().date()
        date_string = date.strftime('%Y-%m-%d')
        return f'https://wordpress.com/stats/day/posts/{domain}?startDate={date_string}'

So we know how to generate the urls for the pages we want to scrape. We compile them using this handy function.
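To get a feel for what these urls look like over a short run of days, here is a minimal stand-alone sketch. It mirrors the url pattern above, but the helper names traffic_url and traffic_urls and the domain example.wordpress.com are placeholders of mine, not part of the scraper itself.

```python
from datetime import date, timedelta

# Placeholder domain for illustration only; swap in your own site.
DOMAIN = 'example.wordpress.com'


def traffic_url(day, domain=DOMAIN):
    """Build the stats url for one day, following the pattern used above."""
    return f'https://wordpress.com/stats/day/posts/{domain}?startDate={day:%Y-%m-%d}'


def traffic_urls(first_day, last_day, domain=DOMAIN):
    """Return one url per day from first_day through last_day, inclusive."""
    n_days = (last_day - first_day).days + 1
    return [traffic_url(first_day + timedelta(days=n), domain) for n in range(n_days)]


urls = traffic_urls(date(2019, 12, 27), date(2019, 12, 29))
for url in urls:
    print(url)
```

Pasting one of the printed urls into your regular browser, with your own domain filled in, is a quick way to check that the pattern still matches your statistics pages.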

If we let the driver browse directly to one of these compiled traffic urls, it will be redirected to the WordPress login page. That’s a bummer!

Hence, whenever we start our program, we will first need to log in once using our password. That’s what the signing_in function below is for. This function takes in a driver, a username, and a password. It uses the compile_traffic_url function to generate a traffic url (by default of today’s traffic [see above]). Then the driver loads the website using its get method. This will redirect us to the WordPress login page. In order for the webpages to load before our driver starts clicking away, we let our computer sleep a bit, using time.sleep.

    def signing_in(driver, username, password):
        print('Sign in routine')
        # Requesting a stats page while logged out redirects to the login page
        url = compile_traffic_url()
        driver.get(url)
        sleep(1)
        # Step 1: fill in the username and continue
        # (find_element_by_* is the Selenium 3 API; Selenium 4 uses driver.find_element(By..., ...))
        field_email = driver.find_element_by_css_selector('#usernameOrEmail')
        field_email.send_keys(username)
        button_submit = driver.find_element_by_class_name('button')
        button_submit.click()
        sleep(1)
        # Step 2: fill in the password and continue
        field_password = driver.find_element_by_css_selector('#password')
        field_password.send_keys(password)
        button_submit = driver.find_element_by_class_name('button')
        button_submit.click()
        sleep(2)

Now, our automated driver is looking at the WordPress login page. We need to help it find where to input the username and password. If you press CTRL+SHIFT+C while on any webpage, the HTML behind it will show. Now you can just hover over the webpage elements, like the login input fields, and see what their CSS selectors, names, and classes are.

So, next, I order the driver to find the HTML element of the username-input field and input my username keys into it. We ask the driver to find the Continue-button and click it. Time for the driver to sleep again, while the page loads the password input field. Afterwards, we ask the driver to find the password input field, input our password, and click the Continue-button a second time. While our automatic login completes, we let the computer sleep some more.
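The fixed sleeps are simple, but they are either too long on a fast connection or too short on a slow one. One possible alternative is to poll for a condition until a timeout expires. The sketch below uses only the standard library; wait_until and page_ready are hypothetical names of mine, and page_ready merely stands in for a check such as "can the driver find the password field yet".

```python
import time


def wait_until(condition, timeout=10.0, interval=0.25):
    """Poll condition() until it returns something truthy or the timeout passes.

    Returns the truthy value, or raises TimeoutError.
    """
    deadline = time.monotonic() + timeout
    while True:
        try:
            result = condition()
        except Exception:
            result = None  # e.g. the element is not on the page yet
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f'condition not met within {timeout} seconds')
        time.sleep(interval)


# Stand-in for a page that is ready on the third check (hypothetical).
attempts = {'count': 0}


def page_ready():
    attempts['count'] += 1
    return 'loaded' if attempts['count'] >= 3 else None


status = wait_until(page_ready, timeout=2.0, interval=0.01)
print(status, attempts['count'])
```

In the scraper, the condition could be a small lambda wrapping the driver lookup that currently follows each sleep. Selenium also ships its own WebDriverWait class for this purpose, which may be the more natural choice.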

Once we have logged in once, we will remain logged in until the Python program ends, which closes the driver.

Okay, so now that we have a function that logs us in, let’s start downloading our visitor statistics!

The download_traffic function takes in a driver, a date, and a list of dates_downloaded (an empty list by default). First, it checks whether the date to download occurs in dates_downloaded. If so, we do not want to waste time downloading statistics we already have. Otherwise, it puts the driver to work downloading the traffic for the specified date following these steps:

- Compile url for the specified date

- Driver browses to the webpage of that url

- Computer sleeps while the webpage loads

- Driver executes script, letting it scroll down to the bottom of the webpage

- Driver is asked to find the button to download the visitor statistics in csv

- Driver clicks said button

- Computer sleeps while the csv is downloaded

If anything goes wrong during these steps, an error message is printed and no document is downloaded. With no document downloaded, our program can try again for that link the next time.

    def download_traffic(driver, date, dates_downloaded=[]):
        if date in dates_downloaded:
            print(f'Already downloaded {date} traffic')
        else:
            try:
                print(f'Downloading {date} traffic')
                url = compile_traffic_url(date=date)
                driver.get(url)
                sleep(1)
                # Scroll to the bottom of the page, where the download button lives
                driver.execute_script("window.scrollTo(0, document.body.scrollHeight);")
                button = driver.find_element_by_class_name('stats-download-csv')
                button.click()
                sleep(1)
            except Exception:
                # Catch regular errors only, so CTRL+C can still stop the program
                print(f'Error during downloading of {date}')

We need one more function to generate the dates_downloaded list of download_traffic. The date_from_filename function below takes in a filename (e.g., paulvanderlaken.com_posts_day_12_28_2019_12_28_2019) and searches for a regular expression date format. The found match is turned into a datetime date using strptime and returned. This allows us to walk through a directory on our computer and see for which dates we have already downloaded visitor statistics. You will see how this works in the main program below.

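    def date_from_filename(filename):
        # Look for the first MM_DD_YYYY pattern in the filename
        match = re.search(r'\d{2}_\d{2}_\d{4}', filename)
        if match is None:
            return None  # not a statistics file, so there is no date to report
        date = datetime.strptime(match.group(), '%m_%d_%Y').date()
        return date

Main program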

In the end, we combine all these above functions in our main program. Here you will need to change five things to make it work on your computer:

- path_data: enter a folder path where you want to store the retrieved visitor statistics csv’s

- path_chromedriver: enter the path to the chromedriver.exe you unpacked

- first_date: enter the date from which you want to start scraping (by default up to today)

- username: enter your WordPress username or email address

- password: enter your WordPress password

    if __name__ == '__main__':
        # Adjust these settings to your own situation
        path_data = 'C:\\Users\\paulv\\stack\\projects\\2019_paulvanderlaken.com-anniversary\\traffic-day\\'
        path_chromedriver = 'C:\\Users\\paulv\\chromedriver.exe'
        first_date = datetime(2017, 1, 18).date()
        last_date = datetime.today().date()
        username = "insert_username"
        password = "insert_password"

        driver = get_driver(dir_download=path_data, path_chromedriver=path_chromedriver)

        # Every date from first_date up to and including last_date
        days_delta = last_date - first_date
        days = [first_date + timedelta(n) for n in range(days_delta.days + 1)]

        # Dates for which a csv already exists in path_data
        dates_downloaded = [date_from_filename(file) for _, _, f in os.walk(path_data) for file in f]

        signing_in(driver, username=username, password=password)
        for d in days:
            download_traffic(driver, d, dates_downloaded)

        driver.close()

If you have downloaded Chromedriver, have copied all the code blocks from this blog into a Python script, and have added in your personal paths, username, and password, this Python program should work like a charm on your computer as well. By default, the program will scrape statistics for all days from first_date up to the day you run the program, but you can of course change this.
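The bookkeeping in the main program, which days exist versus which days are already on disk, can be tried in isolation without a browser. The sketch below is a stand-alone approximation: missing_days is a hypothetical helper of mine, the file names are made up to resemble the WordPress export names, and the folder is a temporary one.

```python
import os
import re
import tempfile
from datetime import date, datetime, timedelta


def date_from_filename(filename):
    """Return the first MM_DD_YYYY date in filename, or None if there is none."""
    match = re.search(r'\d{2}_\d{2}_\d{4}', filename)
    return datetime.strptime(match.group(), '%m_%d_%Y').date() if match else None


def missing_days(folder, first_day, last_day):
    """List the days from first_day through last_day that have no csv in folder yet."""
    found = {date_from_filename(name) for _, _, names in os.walk(folder) for name in names}
    n_days = (last_day - first_day).days + 1
    all_days = [first_day + timedelta(days=n) for n in range(n_days)]
    return [day for day in all_days if day not in found]


# Demo in a throwaway folder with two fake downloads and one unrelated file.
with tempfile.TemporaryDirectory() as folder:
    for name in ('site_posts_day_12_27_2019_12_27_2019.csv',
                 'site_posts_day_12_29_2019_12_29_2019.csv',
                 'notes.txt'):
        open(os.path.join(folder, name), 'w').close()
    todo = missing_days(folder, date(2019, 12, 27), date(2019, 12, 30))

print(todo)
```

Only 28 and 30 December remain to be downloaded here, which is the same skip logic that download_traffic applies one date at a time.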

Results

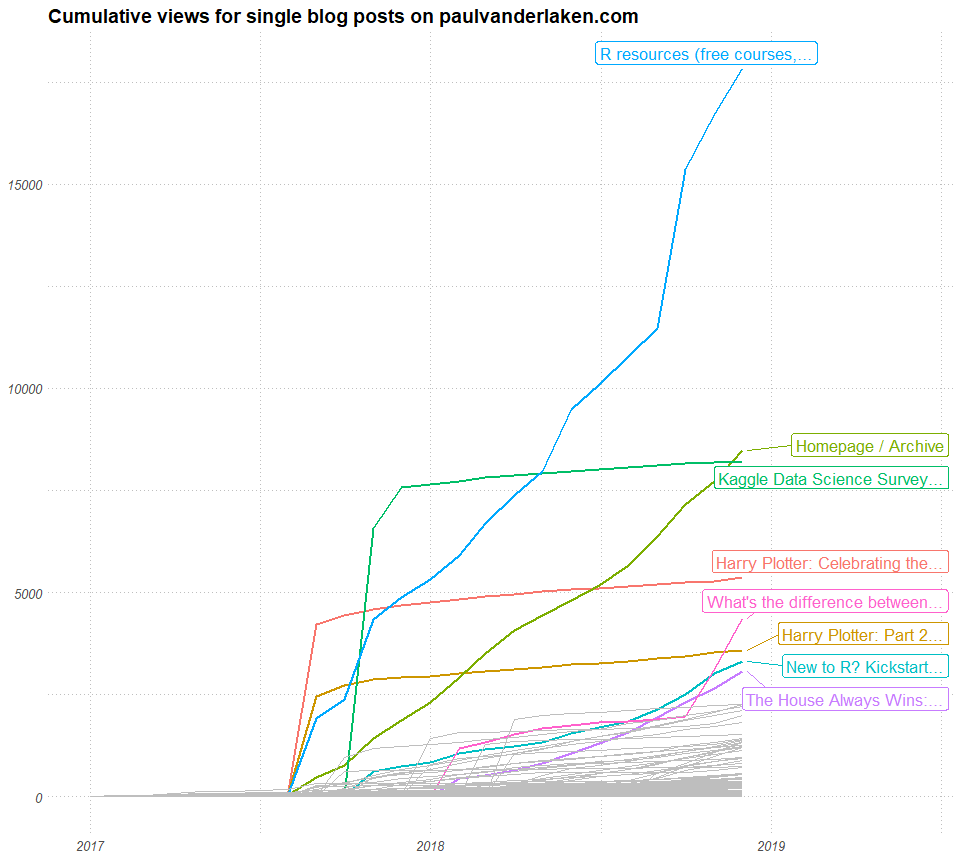

For me, the program took about 10 seconds to download one csv consisting of statistics for one day. So three years of WordPress blogging, or 1095 daily datasets of statistics, were extracted in about 3 hours. I did some nice cooking and wrote this blog in the meantime : )
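As a quick sanity check on that estimate, the arithmetic can be written out with the two rough numbers from the paragraph above (10 seconds per file, 1095 files):

```python
from datetime import timedelta

n_days = 1095          # three years of daily csv files
seconds_per_day = 10   # rough observed time per download

total = timedelta(seconds=n_days * seconds_per_day)
print(total)  # 3:02:30
```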

Compare that to the horror of having to surf, scroll, and click that godforsaken Download data as CSV button ~1100 times!!

Final notes

The main goal of this blog was to share the basic inner workings of this scraper with you, and to give you the same tool to scrape your own visitor statistics.

Now, this project can still be improved tremendously and in many ways. For instance, with very little effort you could add some command line arguments (with argparse) so you can run this program directly or schedule it daily. My next step is to set it up to run daily on my Raspberry Pi.
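A minimal sketch of what such a command line interface might look like is shown below. The flag names are my own invention, chosen to mirror the variables in the main program, and the example argument list is parsed directly so the sketch runs without a terminal. The password is deliberately left out: passing it as an argument would leave it in your shell history, so reading it at run time (for example with the standard getpass module) seems safer.

```python
import argparse
from datetime import datetime


def parse_date(text):
    """Convert a YYYY-MM-DD string to a date, or let argparse report the error."""
    try:
        return datetime.strptime(text, '%Y-%m-%d').date()
    except ValueError:
        raise argparse.ArgumentTypeError(f'expected YYYY-MM-DD, got {text!r}')


def build_parser():
    """Hypothetical command line interface for the scraper settings."""
    parser = argparse.ArgumentParser(description='Download daily WordPress visitor statistics.')
    parser.add_argument('--path-data', required=True, help='folder for the downloaded csv files')
    parser.add_argument('--path-chromedriver', required=True, help='location of the chromedriver executable')
    parser.add_argument('--first-date', required=True, type=parse_date, help='first day to scrape (YYYY-MM-DD)')
    parser.add_argument('--last-date', type=parse_date, default=None, help='last day to scrape; defaults to today')
    parser.add_argument('--username', required=True, help='WordPress username or email address')
    return parser


# Parse an example argument list instead of sys.argv so the sketch runs anywhere.
args = build_parser().parse_args([
    '--path-data', 'traffic-day',
    '--path-chromedriver', 'chromedriver.exe',
    '--first-date', '2017-01-18',
    '--username', 'insert_username',
])
last_date = args.last_date or datetime.today().date()
print(args.first_date, last_date)
```

In a real script you would call parse_args() without a list, so the values come from the command line, and then hand args.path_data and friends to the functions above.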

An additional potential improvement: when the current script encounters no statistics to download for a specific day, no csv is saved. This makes the program try again the next time it is run, as the dates_downloaded list will not include that date. Some minor tweaks will probably solve this issue.
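One possible tweak, sketched here under my own assumptions, is to keep a small text file with the days that turned out to have nothing to download, and to treat those days as done on the next run. The function names and the file name empty_days.txt are hypothetical. How to decide that a day truly has no data, as opposed to a page that simply failed to load, is left open.

```python
import os
import tempfile
from datetime import date


def load_empty_days(path):
    """Read previously recorded no-data days (one ISO date per line)."""
    if not os.path.exists(path):
        return set()
    with open(path) as handle:
        return {date.fromisoformat(line.strip()) for line in handle if line.strip()}


def record_empty_day(path, day):
    """Append a day for which there was nothing to download."""
    with open(path, 'a') as handle:
        handle.write(day.isoformat() + '\n')


# Demo in a throwaway folder.
with tempfile.TemporaryDirectory() as folder:
    log_path = os.path.join(folder, 'empty_days.txt')  # hypothetical file name
    record_empty_day(log_path, date(2017, 1, 19))
    record_empty_day(log_path, date(2017, 1, 20))
    empty_days = load_empty_days(log_path)

print(sorted(empty_days))
```

In the main program, the loaded days could simply be added to dates_downloaded before the download loop starts.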

Moreover, there are many more statistics you could scrape from your WordPress account, like external clicks, the visitors’ home countries, search terms, et cetera.

The above are improvement points you can further develop yourself, and if you do please share them with the greater public so we can all benefit!

For now, I am happy with these data, and will start on building some basic dashboards and visualizations to derive some insights from my visitor patterns. If you have any ideas or experiences please let me know!

I hope this walkthrough and code have helped you get in control of your WordPress website as well. Or that you learned a thing or two about basic web scraping with Python. I am still in the midst of starting with Python myself, so if you have any tips, tricks, feedback, or general remarks, please do let me know! I am always happy to talk code and love to start pet projects to improve my programming skills, so do reach out if you have any ideas!