For fresh R programmers, vectorization can sound awfully complicated. Consider two math problems, one vectorized, and one not:

Why on earth should R spend more time calculating one than the other? In both cases there are the same three addition operations to perform, so why the difference? This is what we will try to illustrate in this post, which is inspired by the work of Noam Ross and the University of Auckland.
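The two problems are not reproduced in the text, so here is a hypothetical stand-in (the numbers and the names a, b, looped and vectorized are assumptions, not the original figures). It shows the same three additions written once as separate calls and once as a single vectorized call:

```r
# Hypothetical stand-in values; not the original example.
a <- c(1, 3, 5)
b <- c(2, 4, 6)

# Not vectorized: three separate calls to `+`, one per pair of numbers.
looped <- numeric(length(a))
for (k in seq_along(a)) {
  looped[k] <- a[k] + b[k]
}

# Vectorized: one call to `+` that handles all three pairs.
vectorized <- a + b

# Both approaches produce the same three sums.
identical(looped, vectorized)
```

With only three numbers you will not notice any difference in speed; the gap only shows up once the vectors get long.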

R behind the scenes:

R is a high-level, interpreted computer language. This means that R takes care of a lot of basic computer tasks for you. For instance, when you type i <- 5.0 you don’t have to tell R:

- That 5.0 is a floating-point number

- That i should store numeric-type data

- To find a place in memory to put 5.0

- To register i as a pointer to that place in memory

You also don’t have to convert i <- 5.0 to binary code. That’s done automatically when you hit Enter. Although you are probably not aware of this, many R functions just wrap your input and pass it on to functions written in compiled languages such as C, C++, or FORTRAN in the back end.
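A quick way to see some of this for yourself (a small sketch, not a tour of R’s internals) is to ask R what it decided about i, and whether a function is one of the built-in primitives implemented in C:

```r
i <- 5.0

# R inferred the storage type on its own: a double-precision float.
typeof(i)            # "double"
is.numeric(i)        # TRUE

# Even a single number is stored as a vector of length one.
length(i)            # 1

# Primitive functions are implemented directly in compiled C code.
is.primitive(sum)    # TRUE
is.primitive(`+`)    # TRUE
```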

Now here’s the crux: if you need to run a function over a set of values, you can either pass a vector of these values through the R function to the compiled code (math example 1), or call the R function once for each value separately (math example 2). If you do the latter, R has to repeat its bookkeeping (working through the bullet points above and interpreting the code) for every single call. If you call the function with a vector instead, that bookkeeping happens only once. For instance, R vectors are restricted to a single data type (e.g., numeric, character), so when you use a vector instead of separate values, R only needs to determine the input data type once, which saves time. In short, there’s no advantage to NOT organizing your data as a vector.
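To make this concrete, here is a rough sketch using sqrt() as the function. The names mixed, x, looped and vectorized are made up for this illustration. Exact timings depend on your machine, so none are quoted here:

```r
# A vector holds one data type; mixing types coerces everything to one.
mixed <- c(1, "a", TRUE)
typeof(mixed)   # "character"

x <- as.numeric(1:1e6)

# One value at a time: sqrt() is called from R once per element.
looped <- numeric(length(x))
t_loop <- system.time(
  for (k in seq_along(x)) looped[k] <- sqrt(x[k])
)

# All at once: the whole vector goes to sqrt() in a single call.
t_vec <- system.time(vectorized <- sqrt(x))

# Same values either way; only the elapsed time differs.
identical(looped, vectorized)
c(loop = t_loop[["elapsed"]], vectorized = t_vec[["elapsed"]])
```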

For-loops and functional programming (*ply)

One example of how new R programmers frequently waste computing time is via memory allocation. Take the below for loop, for instance:

iterations = 10000000

system.time({

j = c()

for(i in 1:iterations){

j[i] <- i

}

})

## user system elapsed

## 3.71 0.23 3.94

Here, vector j starts out empty (c() has length 0), and as the loop runs R has to keep re-sizing the vector and re-allocating memory. Each time that happens, R has to find the vector in memory, create a new vector that will fit more data, copy the old data over, insert the new data, and erase the old vector. This process gets very slow as vectors get big. Nevertheless, it is often how programmers store the results of their iterative processes. A lot of computing time can be saved by pre-allocating vectors to fit all the values beforehand, so that R does not have to do this unnecessary work:

system.time({

j = rep(NA, iterations)

for(i in 1:iterations){

j[i] <- i

}

})

## user system elapsed

## 0.88 0.01 0.89

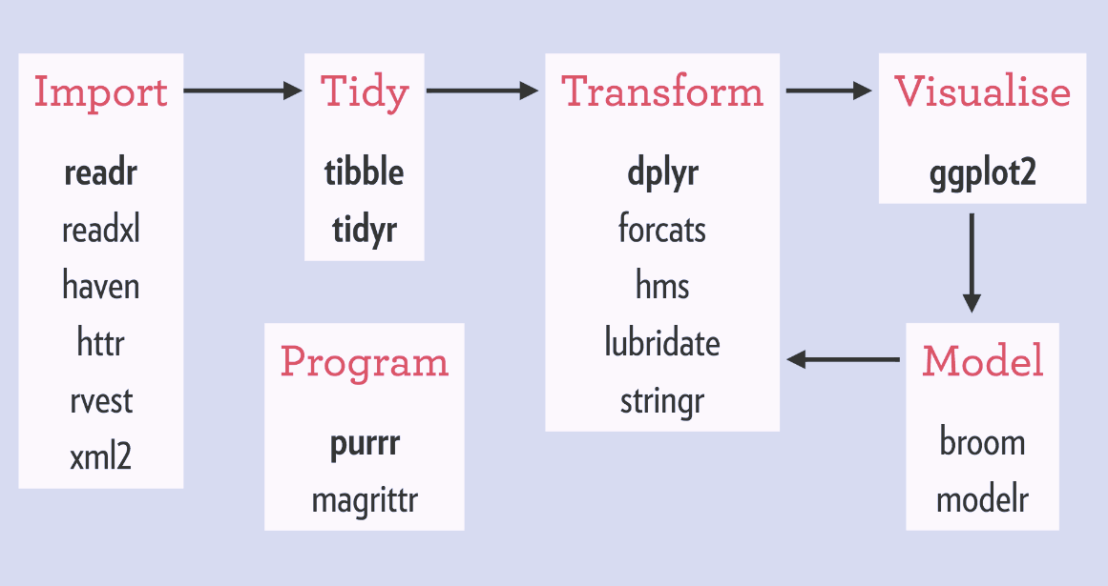
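Two further variations on the pre-allocated loop, offered as a sketch rather than a benchmark. The size n and the names j_loop and j_vec are assumptions for this example. The first pre-allocates with the final data type, the second drops the loop entirely:

```r
n <- 1e5  # assumed size, smaller than `iterations` to keep this quick

# Pre-allocate with the final type. rep(NA, n) starts out as a logical
# vector and is converted to integer on the first assignment.
j_loop <- integer(n)
for (i in seq_len(n)) {
  j_loop[i] <- i
}

# The same result with no explicit loop at all.
j_vec <- seq_len(n)

identical(j_loop, j_vec)
```

For a task this simple the vectorized form is the natural choice; the loop is only here because it mirrors the example being timed.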

More advanced R users tend to optimize further with functionals. This family of functions takes in vectors (or matrices, or lists) of values and applies another function to each element. Examples are apply and the plyr::*ply functions, which speed up R code mainly because the for loops inside them automatically take care of things like pre-allocating the result. Functionals additionally prevent side effects: unwanted changes to your working environment, such as the variable i that is left behind after for(i in 1:10). Although this does not necessarily improve computing time, it is regarded as best practice because it prevents errors later in your code.
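Here is a small sketch of both points. It uses base R’s vapply() rather than plyr so that it runs without extra packages, which is a substitution made for this example. The names squares_loop and squares_fun are also made up:

```r
# for loop: we pre-allocate ourselves, and the counter `i` survives.
squares_loop <- numeric(10)
for (i in 1:10) {
  squares_loop[i] <- i^2
}
exists("i", inherits = FALSE)   # TRUE: `i` is left in the workspace

rm(i)

# vapply() allocates the result itself and checks each value's type.
# Its argument `k` exists only inside the anonymous function.
squares_fun <- vapply(1:10, function(k) k^2, numeric(1))
exists("i", inherits = FALSE)   # FALSE: nothing was left behind

identical(squares_loop, squares_fun)
```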

Conclusions

There are cases when you should use for loops. For example, the performance penalty for using a for loop instead of a vectorized call will be small if the number of iterations is relatively small and the functions called inside your for loop are slow. Here, looping and the overhead from function calls make up only a small fraction of your computing time, and a for loop may be more intuitive or easier for you to read. Additionally, there are cases where for loops make more sense than vectorized functions: (1) when the functions you want to apply don’t take vector arguments and (2) when each iteration depends on the results of previous iterations.
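Case (2) could look like the sketch below. The recurrence, the parameter r and the starting value are all hypothetical, chosen only because each step needs the result of the step before it:

```r
# Hypothetical recurrence: each value depends on the previous one,
# so the steps cannot all be computed in one vectorized call.
n_steps <- 20
r <- 3.7                 # assumed growth parameter
pop <- numeric(n_steps)  # still pre-allocated before the loop
pop[1] <- 0.5            # assumed starting value

for (step in 2:n_steps) {
  pop[step] <- r * pop[step - 1] * (1 - pop[step - 1])
}

pop
```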

Another general rule: fast R code is short. If you can express what you want to do in R in a line or two, with just a few function calls that actually call compiled code, it will be more efficient than a long program with the added overhead of many function calls.
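As an illustration with made-up data, here is a sample variance computed the long way and the short way. The variable names are assumptions for this sketch:

```r
x <- c(2, 4, 4, 4, 5, 5, 7, 9)  # made-up data

# Long form: every addition is a separate R-level operation.
total <- 0
for (value in x) {
  total <- total + value
}
mean_long <- total / length(x)
ss <- 0
for (value in x) {
  ss <- ss + (value - mean_long)^2
}
var_long <- ss / (length(x) - 1)

# Short form: one call that does the work in compiled code.
var_short <- var(x)

all.equal(var_long, var_short)
```

On eight numbers the speed difference is invisible, but the short version is also much harder to get wrong.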

Finally, there is a saying that “premature optimization is the root of all evil”. What this means is that you should not rewrite your R code unless the computing time you are going to save is worth the time invested. Nevertheless, if you understand how vectorization and functional programming work, you will be able to write faster, safer, and more transparent (short & simple) programs.